By Julián Cristia*

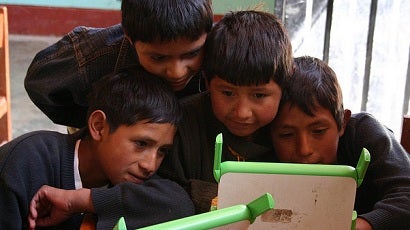

The One Laptop per Child (OLPC) program aims to improve education by providing one laptop to each primary-age child in the poorest areas in the world. The program has been implemented in 42 countries and more than 2 million laptops have been distributed. Complementary inputs, including training and support, are typically included in each deployment. The initiative has been especially popular in our region. In fact, 80% of OLPC laptops purchased worldwide have been distributed in Latin America and the Caribbean. The two leading cases are Uruguay, where virtually every primary schoolchild has received a laptop, and Peru, the largest purchaser of these laptops worldwide. However, OLPC is just the tip of the iceberg. If we also include laptops produced by private firms (such as Intel), current plans aim to distribute about 7 million laptops to public schools in the region (Severín and Capota, 2011). This commitment leads to a variety of questions. Most importantly, what are the impacts of these programs, and how can we maximize their benefits?

To shed some light on these questions we implemented the first large-scale experimental evaluation of the OLPC program. Comprehensive data collected from 319 primary schools in rural Peru after about 15 months of implementation were used to measure the effects on a range of dimensions. This project was the result of close collaboration between policymakers and researchers at the Dirección General de Tecnología Educativa (DIGETE) of the Ministry of Education in Peru, the Inter-American Development Bank, and the think tank GRADE. In joint work with my coauthors Santiago Cueto, Pablo Ibarrarán, Ana Santiago and Eugenio Severín, we produced a working paper reporting the results. The collected data is publicly accessible and can be used to produce new studies.

Our main findings follow. First, the program generated a massive increase in computer access and use. At follow-up, there were 1.18 computers per student in the treatment group, compared with 0.12 in the control group. About 82% of beneficiary students used a computer at school in the previous week compared with 32% for non-beneficiaries; there were also sharp increases in computer use at home. Second, students in treatment schools showed general competence in performing certain tasks on the laptop, such as writing and formatting documents and making audio and video recordings. The development of early digital skills may bring positive long-term benefits if it reduces the costs of adopting new technologies later in life. Third, we found some positive effects in psychometric tests regularly used to measure intelligence. These effects are sizeable, equaling the gains that can be expected when a child ages 5 months. Finally, we found no evidence of effects on standardized tests in Math and Language or on enrollment.

Exploring the effects on intermediate variables can help us understand why these final effects arise. Regarding the positive effects on intelligence tests, computer logs extracted from laptops in the treatment group showed that about 20% of applications opened were educational games that could have positive effects on cognitive processes (for example, Sudoku, Tetris, and various puzzles). Similarly, the lack of effects on Math and Language is consistent with the absence of effects on attendance and on time allocated to homework or reading. The absence of effects on reading behavior may be surprising given that the program markedly increased the availability of books to children. The laptops came loaded with 200 books, whereas only 26% of students in the control group reported having more than 5 books at home. Data from computer logs also showed little use in activities directly related to Math and Language. This may be due to the low availability on the distributed computers of software directly related to the local curriculum (see here for an example on how to use certain programs for Math development). Alternatively, the fact that about 70% of teachers received the 40-hour prescribed training may have been a relevant factor.

The interpretation and implications of these results have been discussed in several online venues. Coverage in The Economist, Associated Press, Miami Herald and other media has emphasized different findings. Rodrigo Arboleda, Nicholas Negroponte and Claudia Urrea have provided the perspective from inside OLPC about the study. And posts by Oscar Becerra, Berk Ozler and Mike Trucano exemplify the reactions that have arisen in policy and academic circles. This discussion has been enriching but, ultimately, more research has to be conducted on the OLPC program and, more generally, on technology in education. Since technology is so versatile, we may expect differential impacts when the hardware, software, training, and support provided vary (see a new IDB study on home computer use). Moreover, the effective use of technology may require certain human capital skills that can vary across individuals, schools and over time.

Theoretically, it is difficult to deny the significant potential that technology can offer in educational settings. First, children are naturally attracted to the use of technology, and this motivation can be leveraged for educational purposes. Second, the ability of computers to personalize the educational experience and individually tailor content can be useful, especially in classrooms where there is substantial heterogeneity across students. Third, for certain exercises, computers can provide immediate feedback including not only whether an answer was correct but also explanations of why it was not correct. Finally, technology can produce, at low cost, real-time data on students’ outcomes and particular inputs (for example, hours using educational software), which can be informative for teachers, parents, school administrators, and students themselves.

The challenge ahead lies in identifying specific models of computer use by grade, subject, and context that can produce measurable learning gains. These educational models should lay out not only the hardware and software needed but also, and very importantly, the training and support activities required so teachers can adopt them effectively. Identifying these models will require a substantial effort in research and development. That effort will surely build upon the available literature (see Cheung and Slavin, 2013 for a meta-analysis on the effects of technology on Math learning), but it will also involve adapting promising solutions to local contexts. Substantial experimentation, with solutions tweaked in response to feedback, will help maximize the chances of positive impacts. Before launching large-scale (and costly) randomized controlled trials, small-scale pilots could generate important insights for the development of promising solutions. Above all, this project will require close collaboration among policymakers, educators, technology specialists, and evaluators. It will be a risky endeavor but, if successful, it may lead to large gains in student learning and, down the road, to increases in productivity and wages and to reductions in poverty.

(First published on February 12, 2013 on Vox LACEA)

Julián Cristia holds a PhD in Economics from the University of Maryland, where his research focused on how fertility shapes female labor market outcomes. Before joining the IDB he worked as Associate Analyst in the Health and Human Resources Division of the Congressional Budget Office. His current research involves finding effective ways to accelerate human capital accumulation in Latin America. With this larger objective in mind, he has analyzed programs that introduced technology to improve education and health, contracted out health services and expanded access to pre-primary education. Cristia has also undertaken research in areas such as the private provision of child care services, the evolution of the mortality-income gradient and the price-elasticity of energy demand. His work has appeared in publications including the Journal of Human Resources and the Journal of Health Economics.

That is a great step to improve education in any area. Keep up the good work. Thanks for sharing.

True that technology is a great help to educate children. This article is very interesting, thanks for posting.
