
An organization’s understanding of its available knowledge is key for continual success, and ultimately for delivering more value to those it serves. Much of that knowledge is written down in text, which makes it a very valuable type of data; however, these days, the sheer quantity of most organizations’ data exceeds the manual capacity of their workers to fully connect the dots. Twenty years ago, the International Data Corporation (IDC) estimated that knowledge workers spent up to 30% of their working hours just looking for information, and more recent research indicates that the time spent in this way may be even higher now. Data scientists, for instance, spend approximately 82% of their time finding, cleaning, transforming, and managing data, which is consistent with our AI Iceberg model. That means only 18% of their working hours are dedicated to analysis, which presents a major challenge for organizations that need quality analysis to make decisions.

So, what is the solution? Training machines to automate data analysis processes. In order to fully take advantage of its knowledge, the modern organization must be capable of analyzing data at scale, not 10 documents at a time but a million documents. Natural Language Processing (NLP) techniques do exactly that.

Language is how we share knowledge

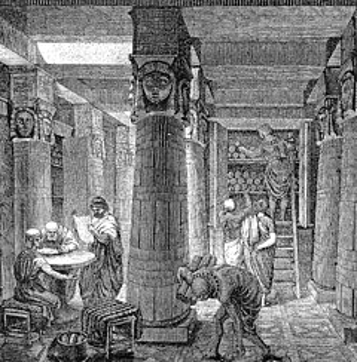

To understand the full value of Natural Language Processing we just need to look at the importance of language. At the earliest moments of our species, we lived in small groups, and gathered around the fire in the evenings to stay safe and to share stories. Those stories were full of knowledge and actionable insights that our ancestors learned through their experiences, and it was language that allowed that essential knowledge to be transferred between them. Over time, as societies grew, these stories began to be written down so that they could be shared far beyond our small groups and passed along to future generations. We needed ways to store and share this knowledge, and libraries began to appear, such as the Library of Ashurbanipal established in the 7th century BC, and the famed Library of Alexandria founded circa 250 BC, which was estimated to hold as many as 400,000 scrolls at one time.

This process continued to evolve over thousands of years and required regular adaptation of the methods used to organize and share ideas. The field of knowledge management was born out of this need to organize, share, and constantly adapt. One of the earliest mentions of the term was in the 1980s when Toyota, the car manufacturer, adopted a series of standard knowledge routines, such as having assembly line workers who were finishing a shift debrief the workers about to start. The means for this knowledge transfer was, of course, language.

Over the last few decades, technology has advanced rapidly. We saw the adoption of databases, records management systems, taxonomies and complex ontologies to bring order to our information-saturated existence. Today, we live in a world where 300 billion e-mails are exchanged every day, and by 2025 the amount of data generated daily is expected to exceed 460 exabytes (equivalent to 460 billion gigabytes). The amount of text created in a single day by a modern organization can be orders of magnitude larger than the entire collection of words in the Library of Alexandria at its peak.

In the midst of this constant barrage of new data, we struggle to recreate how easy it was to share knowledge around that fire. We keep thinking that if we just have the right methodology, if we just have the right documents, if we just have the right software platform, we can somehow recreate that fire dynamic and finally get back to effectively sharing the information we need to do our jobs best. The reality, however, is that we just have too much data to realistically do this at scale.

NLP is key to managing organizational knowledge flows

So, how can Natural Language Processing help? NLP gives us the tools to train machines to mimic an understanding of words and language, which continues to be our central means of sharing knowledge.

Natural language processing techniques already play a role in your everyday life. Have you had a “conversation” with your bank through a chatbot? That’s NLP. Have you used autocomplete while typing a text on your phone? That’s NLP. Or think about what happens when you ask “Alexa” what time it is, and it answers 10pm: it heard your question, converted that sound into text, assessed what you were probably looking for, knew your location, and made sense of all that data to give you an accurate answer in 1 second! That’s NLP.

NLP is already being used by businesses every day to increase revenue and/or reduce costs. For instance, Globe Telecom in the Philippines, with more than 62 million customers, deployed a chatbot to take pressure off its busy call center, which generated a 22% increase in customer satisfaction, a 50% reduction in calls to the hotline, and a 350% increase in employee productivity. These techniques are also proving to be invaluable knowledge management tools that support knowledge flows within an organization. JPMorgan Chase, for instance, implemented a bot that was capable of reading legal documents and analyzing complex legal contracts, and as a result saved over 360,000 hours of manpower within its first 10 months. Another good example is the Argentine Ministry of Modernization, which developed an NLP tool, TextAr, that reads the external questions sent to the Secretariat of Parliamentary Relations, automatically categorizes them based on their content, and generates a report for the appropriate party, saving many hours of reading these messages by hand.
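To make the idea of automatically categorizing and routing incoming questions a little more concrete, here is a minimal sketch in Python. It is not how TextAr or any of the tools above actually work. The category names, keyword lists, and function names are all invented for illustration, and a real system would more likely rely on a trained classifier than on hand-picked keywords.

```python
import re
from collections import Counter

# Hypothetical categories and keywords, invented purely for illustration.
CATEGORY_KEYWORDS = {
    "health": {"hospital", "vaccine", "clinic", "health"},
    "education": {"school", "teacher", "university", "education"},
    "transport": {"road", "train", "bus", "transport"},
}


def tokenize(text):
    """Lowercase the text and split it into alphabetic words."""
    return re.findall(r"[a-z]+", text.lower())


def categorize(text):
    """Return the category whose keywords appear most often in the text."""
    counts = Counter(tokenize(text))
    scores = {
        category: sum(counts[word] for word in keywords)
        for category, keywords in CATEGORY_KEYWORDS.items()
    }
    best = max(scores, key=scores.get)
    # If no keyword matched at all, leave the message for a human to triage.
    return best if scores[best] > 0 else "uncategorized"


def route(messages):
    """Group messages by category, as a simple stand-in for a report."""
    report = {}
    for message in messages:
        report.setdefault(categorize(message), []).append(message)
    return report


if __name__ == "__main__":
    questions = [
        "How many new hospital beds and vaccine doses were funded?",
        "When will the train line to the north be repaired?",
    ]
    for category, items in route(questions).items():
        print(category, "->", items)
```

Even a toy like this shows the shape of the workflow: read the text, score it against what you know about each topic, and hand it to the right party. The value of real NLP tools comes from doing that far more accurately and across millions of documents.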

At the IDB, using artificial intelligence is a centerpiece of our approach to knowledge management, and we leverage NLP techniques to develop tools that address knowledge management challenges, such as expertise location, summarizing a document corpus, organizing a body of knowledge to improve SEO, classifying large amounts of text, and creating a map of the organization’s jargon to identify untapped knowledge.

At the scale at which data is accumulating, NLP offers a radical, cost-effective approach to dealing with the massive amount of text that organizations generate every day. As such, modern knowledge management practitioners must understand how NLP techniques and tools can be used to support knowledge sharing, and how to incorporate them into the KM toolkit. Doing so will enhance our ability to generate scalable solutions that greatly augment knowledge management capacity and transform an organization’s existing data into valuable knowledge.

Let’s get back to that fire.

Natural language processing is a keystone of knowledge management in the digital age. What NLP techniques have you used to support knowledge flows within your organization?

By Kyle Strand, Senior Knowledge Management Specialist in the Knowledge and Learning Department of the Inter-American Development Bank
